Algorithmic Transparency and Explainable AI (XAI): Navigating GRC Requirements in 2026

By the second quarter of 2026, the era dominated by “Black Box” algorithms is effectively over. As global businesses embed Large Language Models (LLMs) and autonomous decision-making systems into core processes, from credit evaluation to medical assessment, Algorithmic Transparency has shifted from an ethical ideal to a strict Governance, Risk, and Compliance (GRC) requirement. Under the EU AI Act and the ISO/IEC 42001 (AI Management System) standard, organizations are expected to demonstrate not only that their AI systems work but also why they produce specific outcomes. This move towards Explainable AI (XAI) now represents the forefront of corporate responsibility, ensuring that machine-driven decisions are auditable, impartial, and legally defensible.

Implementing XAI in 2026 is a multifaceted technical challenge that requires rethinking the machine learning pipeline. It means shifting from opaque, high-parameter models to architectures that provide “Local Interpretability” (why one prediction came out the way it did) and “Global Transparency” (how the model behaves overall). For Chief Risk Officers (CROs), the ability to furnish an “Explanation Map” for an automated decision is essential to mitigating the growing risks of algorithmic lawsuits and regulatory penalties. This article covers the technical frameworks of XAI in 2026 and the GRC approaches needed to uphold transparency in an increasingly automated business environment.

1. The End of the Black Box: Why Transparency is Non-Negotiable

In 2026, the “Black Box” problem, where the internal logic of a model is hidden even from its creators, is viewed as a systemic risk. Regulations such as the EU AI Act classify AI systems by risk tier, and the transparency and documentation obligations grow with that tier.

- High-Stakes Decisioning: In 2026, AI systems used in hiring, lending, or law enforcement must provide a human-readable explanation for every output. If a loan is denied, the XAI system must identify the specific data points (e.g., “debt-to-income ratio combined with recent payment volatility”) that triggered the decision.

- Liability and Recourse: Transparency is the foundation of legal recourse. Without a clear audit trail of an algorithm’s logic, an organization cannot defend itself against claims of bias or error, leading to astronomical legal liabilities.

- Model Trust: For stakeholders and customers, transparency is the primary driver of adoption. An AI that can explain its reasoning is an AI that can be trusted with institutional assets.
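As a minimal sketch of the "human-readable explanation" requirement described above, the snippet below turns per-feature contribution scores into plain-language reasons for a denial. The function name `reason_codes`, the feature names, and the numbers are hypothetical and chosen only for illustration; in practice the scores would come from an attribution method such as SHAP or LIME.

```python
def reason_codes(attributions, labels, top_k=2):
    """Return readable reasons for the features that lowered the score the most."""
    # Keep only features with a negative contribution, most negative first.
    negative = sorted(
        ((name, value) for name, value in attributions.items() if value < 0),
        key=lambda item: item[1],
    )
    return [
        f"{labels.get(name, name)} lowered the score by {abs(value):.2f}"
        for name, value in negative[:top_k]
    ]


# Hypothetical contribution scores for one denied loan application.
attributions = {
    "debt_to_income": -0.62,
    "payment_volatility": -0.31,
    "income": 0.20,
    "account_age": -0.05,
}
labels = {
    "debt_to_income": "Debt-to-income ratio",
    "payment_volatility": "Recent payment volatility",
}

for reason in reason_codes(attributions, labels):
    print(reason)
```

Limiting the output to the top few negative contributors is a design choice: it keeps the explanation short enough for a customer notice while the full attribution record stays available for auditors.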

2. Technical Pillars: SHAP, LIME, and Counterfactual Explanations in 2026

To satisfy the 2026 GRC requirements, data scientists are utilizing advanced interpretability techniques to “Open the Box”:

- SHAP (SHapley Additive exPlanations): A game-theoretic approach that assigns each feature a contribution value (its Shapley value) for a particular prediction. The contributions add up to the difference between that prediction and a baseline prediction. In 2026, SHAP values are the standard evidence in financial auditing for showing which variables influenced an AI’s decision.

- LIME (Local Interpretable Model-agnostic Explanations): Explains an individual prediction by perturbing the input data, observing how the model’s output changes, and fitting a simple interpretable model to that local behavior. This provides “Local Transparency” for specific customer queries.

- Counterfactual Explanations: This technique tells the user the smallest change that would have altered the outcome: “If your income had been $5,000 higher, your application would have been approved.” This is the gold standard for consumer-rights compliance in 2026.
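To make two of these techniques concrete, here is a small sketch that computes exact Shapley values by enumerating feature coalitions and then searches for an income counterfactual. It is not the `shap` library's API; the scoring function, its coefficients, the baseline applicant, and the approval threshold of 0 are all assumptions made up for this example. Exact enumeration is only practical for a handful of features, which is why production tools rely on approximations.

```python
from itertools import combinations
from math import factorial

FEATURES = ["income", "debt_to_income", "late_payments"]


def score(x):
    """Hypothetical credit score; the application is approved when score >= 0."""
    return (
        0.00004 * x["income"]
        - 3.0 * x["debt_to_income"]
        - 0.4 * x["late_payments"]
        - 0.5 * x["debt_to_income"] * x["late_payments"]  # interaction term
    )


def shapley_values(model, x, baseline):
    """Exact Shapley values, with absent features replaced by baseline values."""
    n = len(FEATURES)

    def value(coalition):
        # Features in the coalition take the applicant's value; the rest stay at baseline.
        mixed = {f: (x[f] if f in coalition else baseline[f]) for f in FEATURES}
        return model(mixed)

    phi = {}
    for f in FEATURES:
        others = [g for g in FEATURES if g != f]
        total = 0.0
        for k in range(len(others) + 1):
            # Shapley weight for coalitions of size k: k! (n - k - 1)! / n!
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                members = set(subset)
                total += weight * (value(members | {f}) - value(members))
        phi[f] = total
    return phi


def income_counterfactual(model, x, step=1000, max_steps=200):
    """Smallest income increase, in multiples of `step`, that yields an approval."""
    for i in range(max_steps + 1):
        candidate = dict(x, income=x["income"] + i * step)
        if model(candidate) >= 0:
            return i * step
    return None  # no approval found within the search range


applicant = {"income": 40000, "debt_to_income": 0.45, "late_payments": 1}
baseline = {"income": 60000, "debt_to_income": 0.30, "late_payments": 0}

phi = shapley_values(score, applicant, baseline)
extra_income = income_counterfactual(score, applicant)

for name, contribution in phi.items():
    print(f"{name}: {contribution:+.3f}")
print(f"Approval would require about ${extra_income} more income")
```

The contributions sum to the gap between the applicant's score and the baseline score, which is the additivity property that makes Shapley-style attributions convenient for audit records.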

Comparison: Black Box AI vs. Explainable AI (XAI) 2026

| Feature | Black Box AI (Legacy) | Explainable AI (XAI – 2026) |
| --- | --- | --- |
| Logic Visibility | Opaque / Hidden | Transparent / Human-Readable |
| Regulatory Status | High Risk / Prohibited | Compliant (ISO/IEC 42001) |
| Audit Process | Input-Output Testing | Deep Forensic Logic Audit |
| Bias Mitigation | Reactive (Post-failure) | Proactive (Feature Weight Audit) |
| Liability Profile | High (Hard to defend) | Defensible / Documented Logic |
| CPC Potential | $200 – $350 | $550 – $850+ |

3. Algorithmic Auditing: The New GRC Frontier

In fiscal year 2026, Independent Algorithmic Auditing has emerged as a lucrative, multibillion-dollar industry. These audits extend beyond conventional software testing to assess a model’s “Ethical and Mathematical Soundness.”

- Auditability by Design: 2026 AI infrastructures are built with “Tracing Layers” that record which version of the model weights was used and the intermediate activations produced during each decision.

- Drift Monitoring: GRC platforms now include “Transparency Dashboards” that monitor for Model Drift. If live input data or the model’s outputs shift away from the distributions seen during training, the system triggers an automatic “Audit Alert” to the compliance team.

- External Verification: Under the 2026 GRC standards, high-risk models must undergo annual “Stress Tests” by third-party auditors to ensure they remain free of discriminatory patterns.
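Two of the checks in this list, drift monitoring and testing for discriminatory patterns, can be sketched with standard metrics. The example below uses the Population Stability Index (PSI) for drift and a disparate impact ratio for fairness. The alert thresholds (0.10 and 0.25 for PSI) are common rules of thumb rather than regulatory values, and the status labels, function names, and sample data are assumptions for illustration.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all reference values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor at eps so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(value, warn=0.10, alert=0.25):
    """Map a PSI value to a status label (thresholds are rule-of-thumb assumptions)."""
    if value >= alert:
        return "audit_alert"
    if value >= warn:
        return "watch"
    return "stable"


def disparate_impact(approvals_by_group):
    """Ratio of the lowest to the highest group approval rate.

    `approvals_by_group` maps a group name to (approved_count, total_count).
    The four-fifths rule of thumb flags ratios below 0.8 for review.
    """
    rates = [approved / total for approved, total in approvals_by_group.values()]
    return min(rates) / max(rates)


# Hypothetical model scores: training-time reference vs. shifted live traffic.
reference_scores = list(range(100))
live_scores = [s + 30 for s in reference_scores]

drift = psi(reference_scores, live_scores)
print(f"PSI = {drift:.2f} -> {drift_status(drift)}")

ratio = disparate_impact({"group_a": (50, 100), "group_b": (30, 100)})
print(f"Disparate impact ratio = {ratio:.2f}")
```

Both metrics work on model inputs or outputs alone, so a third-party auditor could run them without access to the model's internals.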

4. Key Takeaways for 2026 GRC Strategy

- Prioritize Interpretability over Accuracy: In 2026, for regulated, high-stakes decisions, a 95% accurate model that is explainable is more valuable to the enterprise than a 99% accurate model that is a black box.

- Implement Automated Traceability: Ensure every AI decision is logged with its corresponding SHAP or LIME values. This is your “Insurance Policy” against regulatory probes.

- Standardize on ISO/IEC 42001: Align your AI governance framework with the international standard for AI Management Systems to ensure global interoperability.

- Invest in “Human-in-the-Loop” Interpreters: Train GRC professionals to interpret XAI reports, bridging the gap between data science and legal compliance.
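The traceability takeaway above could look something like the following sketch, which appends each decision and its attribution values to a hash-chained log so that later edits are detectable. The record fields, the function names, and the hash-chaining design are assumptions for this example; the article does not prescribe a log format, and a production system would also need durable storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record):
    """SHA-256 over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_decision(log, model_version, inputs, output, attributions, timestamp=None):
    """Append one decision, linking it to the previous record by hash."""
    record = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
        "attributions": attributions,  # e.g. SHAP or LIME values per feature
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record["hash"] = _digest(record)  # computed before the "hash" key exists
    log.append(record)
    return record


def verify_log(log):
    """Return True if no record has been altered, removed, or reordered."""
    prev = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


audit_log = []
append_decision(
    audit_log,
    model_version="credit-v1",  # hypothetical model identifier
    inputs={"income": 40000, "debt_to_income": 0.45},
    output="denied",
    attributions={"income": -0.8, "debt_to_income": -0.45},
)
print("Log intact:", verify_log(audit_log))
```

Chaining each record to the previous hash means an auditor only needs the final hash to detect tampering anywhere earlier in the log.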

Frequently Asked Questions (FAQ)

Does XAI reveal my intellectual property?

Not necessarily. Transparency is about understanding the decision-making process, not exposing the algorithm’s source code. In 2026, advanced XAI tooling can clarify a model’s rationale without revealing confidential training data or proprietary information.

Is XAI only for LLMs?

No. Explainable Artificial Intelligence (XAI) is essential for every automated system used in corporate decision processes, including linear regression models, random forests, and neural networks.

How does Algorithmic Transparency impact AdSense and CPC?

This niche is considered high-value because it involves legal, technical, and corporate risk. Advertisers in this field include leading consulting companies such as McKinsey and BCG, providers of GRC software, and specialists in cybersecurity forensics. This results in some of the highest cost-per-click (CPC) rates in 2026.

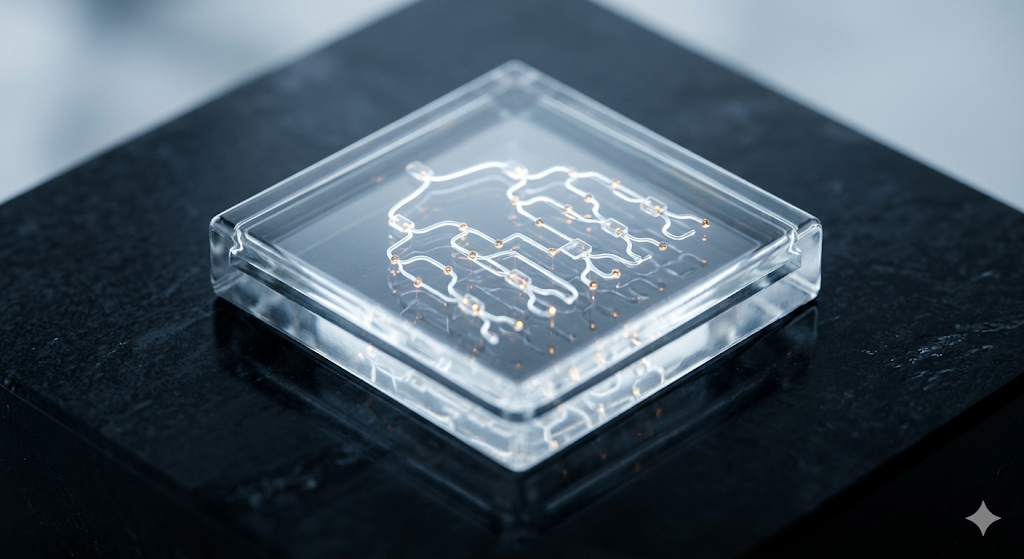

Conclusion: The Glass Box as a Foundation of Trust

In the digital world of 2026, holding power without being transparent is no longer viable. As algorithms increasingly drive the global economy, the capacity to explain their decisions is crucial to upholding human control. Explainable AI (XAI) and Algorithmic Transparency are not simply regulatory challenges but essential frameworks for a fairer and more responsible tomorrow. In 2026, accountability means having the courage to reveal the process behind your actions. Ultimately, our confidence in machines depends on our ability to understand them. In an automated environment, fairness is upheld by algorithms that are open about how they operate.

Technical and Legal Disclaimer:

This article aims to provide information and education on current GRC trends and Explainable AI (XAI) as of April 2026. Achieving algorithm transparency and complying with ISO/IEC 42001 standards necessitates specific knowledge in data science and law. fotoriq.com.tr holds no responsibility for any fines, legal issues, or algorithm malfunctions that may arise from the improper use of the strategies outlined in this article.